entropy.ubuntu.com giveth, and taketh away

A closer look at what data is sent to entropy.ubuntu.com on Cloud instance boot

Routine Test

At Chaser, we routinely test a variety of real-world setups through the DiscrimiNAT Firewall. This helps us stay on top of implementation subtleties across vendors and catch regressions early as we improve the product.

FQDN filter for Ubuntu on GCP egress, shall we?

So we fire up Ubuntu Bionic Beaver LTS this time, with egress allowed to 0.0.0.0/0 on all ports.

These observations were made while deploying an Ubuntu 18.04 LTS Minimal VM, with image name ubuntu-minimal-1804-bionic-v20201123, in the europe-west2 region of Google Cloud.

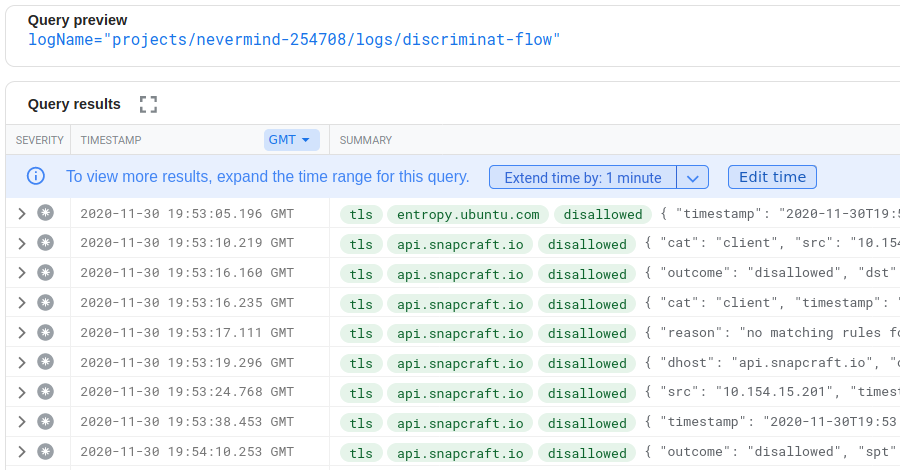

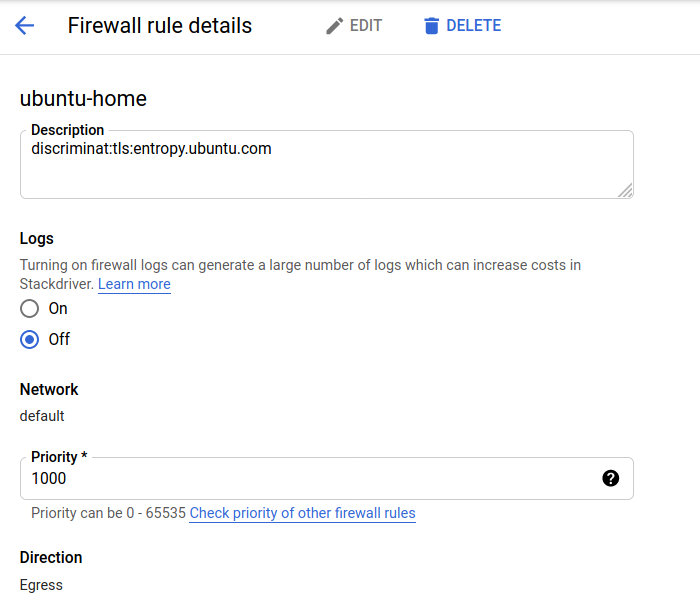

Flow Logs

As expected, there is some internet-bound traffic, but nowhere near as much as Windows was attempting.

entropy.ubuntu.com...? Is it what it appears to be at first glance? If it is indeed getting more entropy for us from somebody else's Cloud, was it needed? Many more questions pop into our heads, but we suppress them for now by deciding to have a look at what it's all about. We create a bastion and log in.

joe@my-app:~$

As soon as we log in, the OS tries to reach out to the internet again. api.snapcraft.io continues to nag, and with changelogs.ubuntu.com blocked we might have missed a welcome message at login.

With some basic web searching, we find out it's the pollinate client in Ubuntu, whose package creates a user account and installs a systemd service. The man page is reasonably thorough, so we decide to give it a go in manual, interactive mode. But first, we allow this FQDN through DiscrimiNAT.

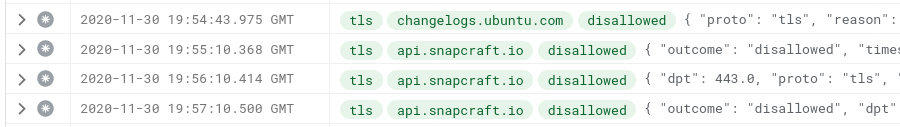

We stick in discriminat:tls:entropy.ubuntu.com in the description field of the associated firewall rule. More on that syntax here.

Manual Run

joe@my-app:~$ sudo su - pollinate -s /bin/bash

pollinate@my-app:~$ pollinate --testing --device - > /tmp/rand

pollinate@my-app:~$ ls -lh /tmp/rand

-rw-r--r-- 1 pollinate daemon 64 Nov 30 20:04 /tmp/rand

Hooray! We have 64 bytes of random data.

pollinate itself appears to be a shell script that calls curl under the hood and feeds these 64 bytes into /dev/urandom, thereby enriching the system's entropy from a truly external source. Have we deprived our server of entropy? Have we deprived our server of good quality entropy? We decide to dig in a bit deeper and begin by leveraging the aptly-named --curl-opts argument to pollinate.

pollinate@my-app:~$ pollinate --curl-opts "--trace-ascii /tmp/curl-trace.txt"

<13>Nov 30 20:01:11 pollinate[1998]: client sent challenge to [https://entropy.ubuntu.com/]

<13>Nov 30 20:01:11 pollinate[1998]: client verified challenge/response with [https://entropy.ubuntu.com/]

<13>Nov 30 20:01:11 pollinate[1998]: client hashed response from [https://entropy.ubuntu.com/]

<13>Nov 30 20:01:11 pollinate[1998]: client successfully seeded [/dev/urandom]

pollinate@my-app:~$ cat /tmp/curl-trace.txt

...

20:01:11.942345 => Send header, 397 bytes (0x18d)

0000: POST / HTTP/1.1

0011: Host: entropy.ubuntu.com

002b: User-Agent: cloud-init/20.3-2-g371b392c-0ubuntu1~18.04.1 curl/7.

006b: 58.0-2ubuntu3.10 pollinate/4.33-0ubuntu1~18.04.1 Ubuntu/18.04.5/

00ab: LTS GNU/Linux/5.4.0-1029-gcp/x86_64 Intel(R)/Xeon(R)/CPU/@/2.20G

00eb: Hz uptime/494.84/962.80 virt/kvm img/build_name/minimal img/seri

012b: al/20201123

0138: Accept: */*

0145: Content-Length: 138

015a: Content-Type: application/x-www-form-urlencoded

018b:

20:01:11.942374 => Send data, 138 bytes (0x8a)

0000: challenge=b93b56beff9f684f44302a3d6f79fdfe606f77e66aac7b7a4f59f9

0040: 2353948e779879315531688f88571eb8c9325950fc2b48b3949c392b9d9b8e05

0080: 3084121e40

20:01:11.942397 == Info: upload completely sent off: 138 out of 138 bytes

...
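
As an aside on the request body: the three data lines in the trace can be stitched back together to check that the sizes add up. The sketch below is a small standalone Python check, not part of pollinate, using the lines copied from the trace above. That the challenge is a SHA-512 digest is only an inference from its length, not something the trace confirms.

```python
import hashlib
import string

# The three "Send data" lines from the curl trace, with the offsets removed.
TRACE_BODY_LINES = [
    "challenge=b93b56beff9f684f44302a3d6f79fdfe606f77e66aac7b7a4f59f9",
    "2353948e779879315531688f88571eb8c9325950fc2b48b3949c392b9d9b8e05",
    "3084121e40",
]


def rebuild_body(lines):
    """Join the wrapped trace lines back into the single form-encoded body."""
    return "".join(lines)


body = rebuild_body(TRACE_BODY_LINES)
field, _, challenge = body.partition("=")

# Total body length, to compare with the Content-Length header in the trace.
print(len(body))

# Is the value pure hex, and is it as long as a hex-encoded SHA-512 digest?
is_hex = all(ch in string.hexdigits for ch in challenge)
same_length_as_sha512 = len(challenge) == hashlib.sha512().digest_size * 2
print(field, len(challenge), is_hex, same_length_as_sha512)
```

The body length agrees with the Content-Length header, and the value has the length of a hex-encoded 512-bit digest.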

Whoa! The User-Agent seems a bit loaded. We run pollinate again with its handy --print-user-agent option.

pollinate@my-app:~$ pollinate --print-user-agent

cloud-init/20.3-2-g371b392c-0ubuntu1~18.04.1 curl/7.58.0-2ubuntu3.10 pollinate/4.33-0ubuntu1~18.04.1 Ubuntu/18.04.5/LTS GNU/Linux/5.4.0-1029-gcp/x86_64 Intel(R)/Xeon(R)/CPU/@/2.20GHz uptime/783.01/1537.80 virt/kvm img/build_name/minimal img/serial/20201123

Closer Look

Let's look at each field individually:

cloud-init/20.3-2-g371b392c-0ubuntu1~18.04.1 – Most likely the version of the installed cloud-init package.

curl/7.58.0-2ubuntu3.10 – Definitely the version of curl used.

pollinate/4.33-0ubuntu1~18.04.1 – Version of pollinate itself.

Ubuntu/18.04.5/LTS – OS release, down to the point release.

GNU/Linux/5.4.0-1029-gcp/x86_64 – Kernel.

Intel(R)/Xeon(R)/CPU/@/2.20GHz – The CPU model of the platform this ran on.

uptime/783.01/1537.80 – The first number is the elapsed uptime in seconds; the second is the idle time over that period, summed across all cores.

virt/kvm – The virtualisation as detected.

img/build_name/minimal – Ubuntu-specific build name.

img/serial/20201123 – Ubuntu-specific build number.
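
To make the breakdown above concrete, here is a minimal Python sketch that splits the User-Agent on whitespace and attaches a label to each token. The labels and the fixed order of ten tokens are assumptions based only on the output observed on this image; other releases or images may emit a different set, in which case the function refuses to guess.

```python
# User-Agent string as printed by `pollinate --print-user-agent` above.
USER_AGENT = (
    "cloud-init/20.3-2-g371b392c-0ubuntu1~18.04.1 "
    "curl/7.58.0-2ubuntu3.10 "
    "pollinate/4.33-0ubuntu1~18.04.1 "
    "Ubuntu/18.04.5/LTS "
    "GNU/Linux/5.4.0-1029-gcp/x86_64 "
    "Intel(R)/Xeon(R)/CPU/@/2.20GHz "
    "uptime/783.01/1537.80 "
    "virt/kvm "
    "img/build_name/minimal "
    "img/serial/20201123"
)

# Hypothetical labels, in the order the tokens appeared on this image.
FIELD_LABELS = (
    "cloud-init version",
    "curl version",
    "pollinate version",
    "OS release",
    "kernel",
    "CPU model",
    "uptime and idle time",
    "virtualisation",
    "image build name",
    "image serial",
)


def label_user_agent(user_agent):
    """Pair each whitespace-separated token with its assumed label."""
    tokens = user_agent.split()
    if len(tokens) != len(FIELD_LABELS):
        raise ValueError("unexpected number of User-Agent tokens")
    return dict(zip(FIELD_LABELS, tokens))


fields = label_user_agent(USER_AGENT)
for label, token in fields.items():
    print(f"{label:22} {token}")
```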

There's definitely enough data in there to pinpoint the patch level of the OS (and the time since the last reboot). Coupled with the source IP, it can be traced to a particular region of a particular Cloud. Can the IP address lead to identifying the organisation or related forward DNS zones? It is not straightforward or always possible, but it has happened in the past.
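
As a rough illustration of what a recipient could derive from the uptime field alone, the Python sketch below estimates the boot time and a lower bound on the number of cores. It uses the uptime token and timestamp from the curl trace earlier; the year 2020 is an assumption (the trace shows only "Nov 30"), and the function names are made up for this example.

```python
import math
from datetime import datetime, timedelta


def parse_uptime_token(token):
    """Split 'uptime/<uptime>/<idle>' into two floats (seconds)."""
    name, uptime, idle = token.split("/")
    if name != "uptime":
        raise ValueError("not an uptime token")
    return float(uptime), float(idle)


def estimate_boot_time(seen_at, uptime_seconds):
    """Boot time is the observation time minus the reported uptime."""
    return seen_at - timedelta(seconds=uptime_seconds)


def minimum_cpu_count(uptime_seconds, idle_seconds):
    """Idle time is summed across cores, so idle/uptime bounds the core count from below."""
    if uptime_seconds <= 0:
        raise ValueError("uptime must be positive")
    return max(1, math.ceil(idle_seconds / uptime_seconds))


uptime, idle = parse_uptime_token("uptime/494.84/962.80")
seen_at = datetime(2020, 11, 30, 20, 1, 11)  # year is assumed

print(estimate_boot_time(seen_at, uptime))
print(minimum_cpu_count(uptime, idle))
```

Because the idle figure here is nearly twice the uptime, the instance must have had at least two cores, which narrows down the machine type a little further.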

Digging into some commit history of the source code, there are some tell-tale signs of how these fields can be useful on the server-side.

> `# Construct a user agent, with useful debug information`
> `# Very similar to Firefox and Chrome`

link 🤨 Sure. If you say so.

add uptime/idletime to user agent to help detect abuse, LP: #1638552

link 🤨 LP #1638552 doesn't go into much detail of what and how. Perhaps because of the “This bug affects 1 person” note.

Ubuntu minimal images include build_name as 'minimal', so the stated reason for not including 'server' is now invalid.

link 🤨 TBH we weren't expecting pollinate and snapd to be in the minimal image at all, but okay.

Additionally, we collect up data in /etc/cloud/build.info. This info can be used to see how often images are refreshed or if an image is an official ubuntu image.

link 🤨 Seems like we've progressed from just getting some fresh, quality entropy.

The domain of entropy for Linux is not devoid of bugs and controversies:

- The first one that comes to mind is the infamous systemd bug with AMD CPUs

- The decision on whether to trust CPU Random Number Generators was deferred to distros

- Linux To Better Protect Entropy Sent In From User-Space looks like it can alleviate the risk from accepting this source of entropy that we are looking at.

We also know from this bug report that low entropy can stall a system pretty early on in the boot process. pollinate.service starts fairly late, since it needs outbound network connectivity at the very least. It is ordered before the SSH service, though, which may need to generate its keys the first time around.

joe@my-app:~$ cat /lib/systemd/system/pollinate.service

[Unit]

Description=Pollinate to seed the pseudo random number generator

Before=ssh.service

After=network-online.target

ConditionVirtualization=!container

ConditionPathExists=!/var/cache/pollinate/seeded

[Service]

User=pollinate

ExecStart=/usr/bin/pollinate

Type=oneshot

[Install]

WantedBy=multi-user.target

The Description feels a bit lacking.
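
The ordering keys and the two Condition lines decide when this unit actually runs. As a rough illustration, the Python sketch below parses the unit text with configparser and evaluates only the ConditionPathExists check, using the systemd rule that a leading ! negates the test. The helper names are hypothetical, other condition prefixes are not handled, and that pollinate writes the seeded marker after a successful run is an assumption drawn from the path name.

```python
import configparser
from pathlib import Path

# Unit file contents as shown above.
UNIT_TEXT = """\
[Unit]
Description=Pollinate to seed the pseudo random number generator
Before=ssh.service
After=network-online.target
ConditionVirtualization=!container
ConditionPathExists=!/var/cache/pollinate/seeded

[Service]
User=pollinate
ExecStart=/usr/bin/pollinate
Type=oneshot

[Install]
WantedBy=multi-user.target
"""


def load_unit(text):
    """Parse a simple unit file (no repeated keys) into sections."""
    parser = configparser.ConfigParser(interpolation=None, delimiters=("=",))
    parser.optionxform = str  # keep systemd's case-sensitive key names
    parser.read_string(text)
    return parser


def path_condition_met(condition, exists=lambda p: Path(p).exists()):
    """Evaluate a ConditionPathExists value; a leading '!' negates it."""
    negate = condition.startswith("!")
    path = condition[1:] if negate else condition
    present = exists(path)
    return (not present) if negate else present


unit = load_unit(UNIT_TEXT)
condition = unit["Unit"]["ConditionPathExists"]

print("ordered before:", unit["Unit"]["Before"])
print("ordered after: ", unit["Unit"]["After"])
print("path condition currently met:", path_condition_met(condition))
```

Read this way, the unit is skipped on any boot where /var/cache/pollinate/seeded already exists, so the call to entropy.ubuntu.com would typically happen once per instance rather than on every boot.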

The concerns around entropy.ubuntu.com have been discussed on the internet before. The most succinct summary, from our search, lies in the email thread [Cryptography] Security of Ubuntu RNG pollinate? from 2016.

We decide to give it a go without it.

Without It

joe@my-app:~$ sudo apt-get purge pollinate snapd ubuntu-release-upgrader-core

An image properly sealed, with all due care taken and private key material wiped, comes up just fine and in the usual amount of time. We even seem to have a decent level of entropy at hand:

joe@my-app:~$ cat /proc/sys/kernel/random/entropy_avail

1157
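
For checking this across a fleet rather than by hand, the same figure can be read programmatically. This is a minimal Python sketch, assuming a Linux host that exposes the procfs path used in the command above; the function names are illustrative.

```python
from pathlib import Path

# Same procfs file that the `cat` command above reads.
ENTROPY_AVAIL = Path("/proc/sys/kernel/random/entropy_avail")


def parse_entropy_avail(text):
    """Convert the file's contents (a single integer, in bits) to an int."""
    return int(text.strip())


def read_entropy_avail(path=ENTROPY_AVAIL):
    """Return the kernel's entropy estimate, or None if the file is unavailable."""
    try:
        return parse_entropy_avail(path.read_text())
    except OSError:
        return None


print(read_entropy_avail())
```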

And the flow logs are quite quiet.

Questions Remain

There remains a question about the quality of entropy. There are statistical test suites designed to assess the quality of random numbers, and perhaps at a hackathon, we'll run those repeatedly for an entire weekend.
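
To give a flavour of what such a test looks like, here is a minimal Python sketch of the simplest one, the frequency (monobit) test from the NIST SP 800-22 suite, which checks whether ones and zeros are roughly balanced. It is one test out of many and passing it says very little on its own; the 0.01 threshold is the conventional significance level, and the sample inputs are contrived for illustration.

```python
import math


def monobit_p_value(data):
    """Frequency (monobit) test: p-value for the balance of 1 and 0 bits."""
    n = len(data) * 8
    if n == 0:
        raise ValueError("need at least one byte")
    ones = sum(bin(byte).count("1") for byte in data)
    # Map bits to +1/-1 and sum: each 1 adds one, each 0 subtracts one.
    s_n = 2 * ones - n
    s_obs = abs(s_n) / math.sqrt(n)
    return math.erfc(s_obs / math.sqrt(2))


# Contrived inputs: perfectly balanced bits versus all zeros.
balanced = bytes([0xAA]) * 64
all_zero = bytes(64)

print(monobit_p_value(balanced))         # balanced bits give the maximum p-value
print(monobit_p_value(all_zero) < 0.01)  # heavily biased input fails the test

# On the instance, the 64 bytes captured earlier could be checked with:
# monobit_p_value(open("/tmp/rand", "rb").read())
```

A perfectly alternating pattern passes this test while being entirely predictable, which is exactly why the full suites run many complementary tests over far more data than 64 bytes.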

There is also the question about all that data in the User-Agent header. Is all of it really necessary for monitoring and debugging the service?

We hope you enjoyed this Stackdriver and Linux exercise, armed now with the knowledge that a modern firewall can prevent a lot of undesirable behaviour emanating from programs we trust to run in the Cloud.

Do watch our 2-minute demo on how DiscrimiNAT integrates with Firewall Rules on Google Cloud.

Edit 1: User jlgaddis from Hacker News shared their thoughts on motd.ubuntu.com (another host Ubuntu instances contact every now and then), in a discussion around the SolarWinds supply chain issue and its C2 channels here.

Edit 2: User sgorf from Reddit pointed to an FAQ from 2014 on the matter, written by Dustin Kirkland, in a thread here.
